System Architecture and Secure Content Management

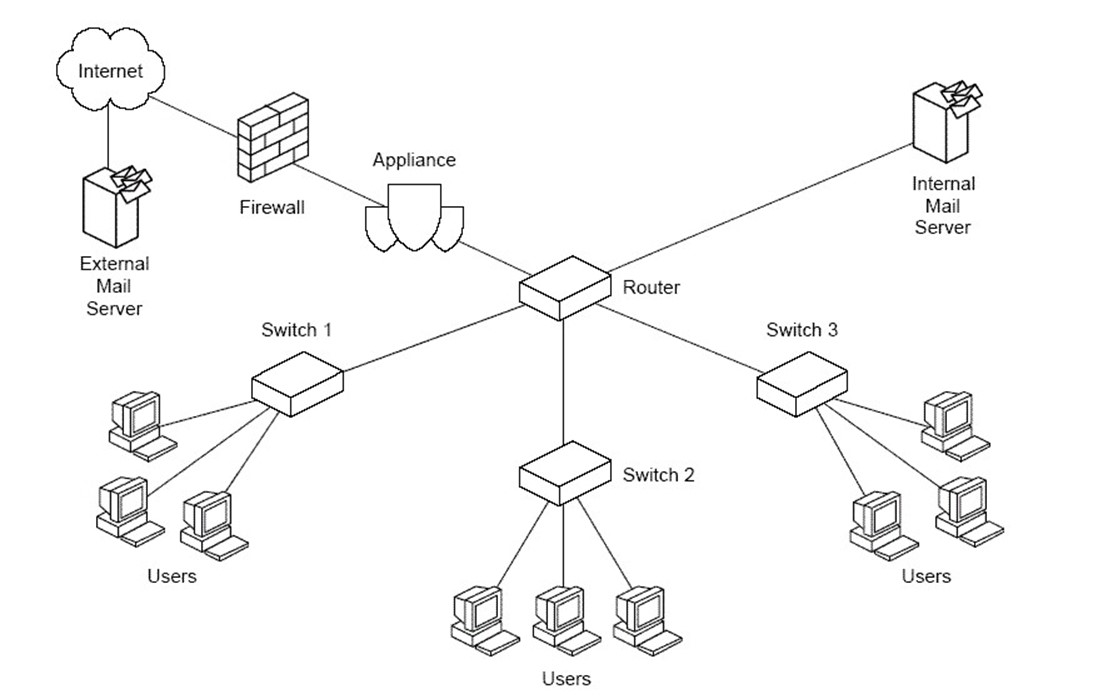

Where should a secure content appliance be placed?

Secure content appliances are used to control what is allowed to enter and leave an organization's network. It follows logically that the device should be located on the perimeter of the network. Perimeters can use a single layer of defense with a single level of firewalls that block ports and filter network traffic at the lower levels of the OSI network model (see question 1.1 for more information about the OSI network model). A common configuration creates a multi-level perimeter known as a DMZ (de-militarized zone).

DMZs use multiple network segments to create three zones: the external zone, which includes the Internet; the internal zone, which includes an organization's network, servers, desktops, and other devices accessible to the internal network; and the DMZ, which lies between the internal and external zone.

Typically, the Web servers are located in the DMZ. These servers need to be accessible from the Internet but also protected from it. Applications running on the Web server invoke applications and services running on servers on the internal network. For example, an application running on the Web server may query a customer database, located in the internal zone. Internet users have no direct access to the database server; all interaction is through a proxy application on the Web server. This configuration balances the need for accessibility with the need for controlled access to the mission-critical servers (see Figure 3.1).

Figure 3.1: DMZs provide additional protection of mission-critical servers by limiting access to those servers to trusted proxies in the DMZ.
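The three-zone arrangement described above can be read as a small allow-list of permitted connection directions. The following is a minimal sketch rather than a real firewall configuration; the zone names and the `ALLOWED_FLOWS` table are assumptions made for illustration.

```python
# Hypothetical zone model: (source zone, destination zone) pairs that may connect.
ALLOWED_FLOWS = {
    ("external", "dmz"),   # Internet users reach the Web servers
    ("dmz", "internal"),   # Web server proxies query internal servers
}

def is_allowed(source_zone: str, dest_zone: str) -> bool:
    """Return True if a connection from source_zone to dest_zone is permitted."""
    return (source_zone, dest_zone) in ALLOWED_FLOWS

print(is_allowed("external", "dmz"))       # Internet user to Web server
print(is_allowed("external", "internal"))  # Internet user to database server
```

The second call is refused because no rule lets the external zone reach the internal zone directly; the only path to the database runs through the proxy application in the DMZ.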

Secure Content Device Operational Modes

In addition to the existing configuration of a network, a secure content administrator needs to consider how the device will be configured. There are three options:

- Explicit proxy mode

- Transparent router mode

- Transparent bridge mode

The choice of mode determines whether other devices are aware of its use, how the secure appliance is configured and connected to the network, and how much configuration work is required during installation. The focus here is on how the device is connected to the network.

Explicit Proxy Mode

In explicit proxy mode, servers that communicate with the secure content device are configured to communicate directly with the device. For example, incoming mail is routed from the Internet to the secure content device and then passed to the internal email server. When in explicit proxy mode, only HTTP, SMTP, POP3, and FTP traffic should be routed to the secure content device; all other protocols are refused by the device.
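As a rough illustration of the accept-or-refuse behavior described above, the sketch below keys the four protocols by their standard well-known ports. The function name and return strings are hypothetical, and a real appliance may identify protocols through configuration rather than by port number alone.

```python
# The four protocols named in the text, keyed by their standard well-known ports.
PROXIED_PORTS = {80: "HTTP", 25: "SMTP", 110: "POP3", 21: "FTP"}

def handle_connection(dest_port: int) -> str:
    """Decide what an explicit proxy would do with a connection to dest_port."""
    protocol = PROXIED_PORTS.get(dest_port)
    if protocol is None:
        return "refused"              # all other protocols are refused
    return f"scan as {protocol}"

print(handle_connection(25))    # scan as SMTP
print(handle_connection(6667))  # refused
```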

In explicit proxy mode, administrators have great flexibility in placing a secure content server. The one configuration rule is that the secure content appliance must be located behind a firewall; other than that, the device can be placed anywhere in a DMZ or internal network.

The reason for the flexibility in positioning the devices is that other devices that use the secure content device are configured to explicitly send traffic to and receive traffic from the device. For example, in a switched network, the secure content device can be connected to any router or switch in the network. Although the device can be placed anywhere in the network, some positions will still be better than others.

Consider the flow of traffic. As all incoming and outgoing email and Web traffic will pass through the device, it should be located in a segment that minimizes additional traffic. For example, if an email server and Web server are in the same network segment, it makes sense to position the secure content device there because traffic will eventually flow into that segment to reach the email and Web servers.

Also consider the bandwidth utilization on a segment. If network traffic is near capacity on a segment, adding a secure content device to that segment will only increase the network load.
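The two placement considerations above (traffic flow and bandwidth utilization) can be combined into a simple selection rule. This is a sketch under stated assumptions: the `Segment` fields, the example data, and the 0.8 utilization threshold are all hypothetical, not vendor guidance.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    name: str
    hosts_filtered_servers: bool  # email or Web servers already live here
    utilization: float            # fraction of capacity in use, 0.0 to 1.0

def pick_segment(segments, max_utilization=0.8):
    """Prefer segments that already carry the filtered traffic and have headroom."""
    candidates = [s for s in segments if s.utilization < max_utilization]
    if not candidates:
        return None
    # Segments hosting the servers sort first; ties go to the least loaded.
    return min(candidates,
               key=lambda s: (not s.hosts_filtered_servers, s.utilization))

segments = [
    Segment("server-a", True, 0.95),   # right servers, but near capacity
    Segment("server-b", True, 0.40),
    Segment("office", False, 0.10),
]
choice = pick_segment(segments)
print(choice.name)  # server-b
```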

Transparent Router Mode

In transparent router mode the secure content device acts as both a content filter and a router. As in explicit proxy mode, the secure content device should be behind the firewall.

In transparent router mode, clients are not reconfigured to send and receive traffic to and from the secure content device. Only the firewall and other routers must be reconfigured to direct traffic to the secure content device. This setup eliminates the need to change client configurations but it does limit options for placing the device.

It is recommended that a secure content device in transparent router mode be placed between the firewall and a router. In cases in which the secure content device is the only router, it should be placed immediately after the firewall.

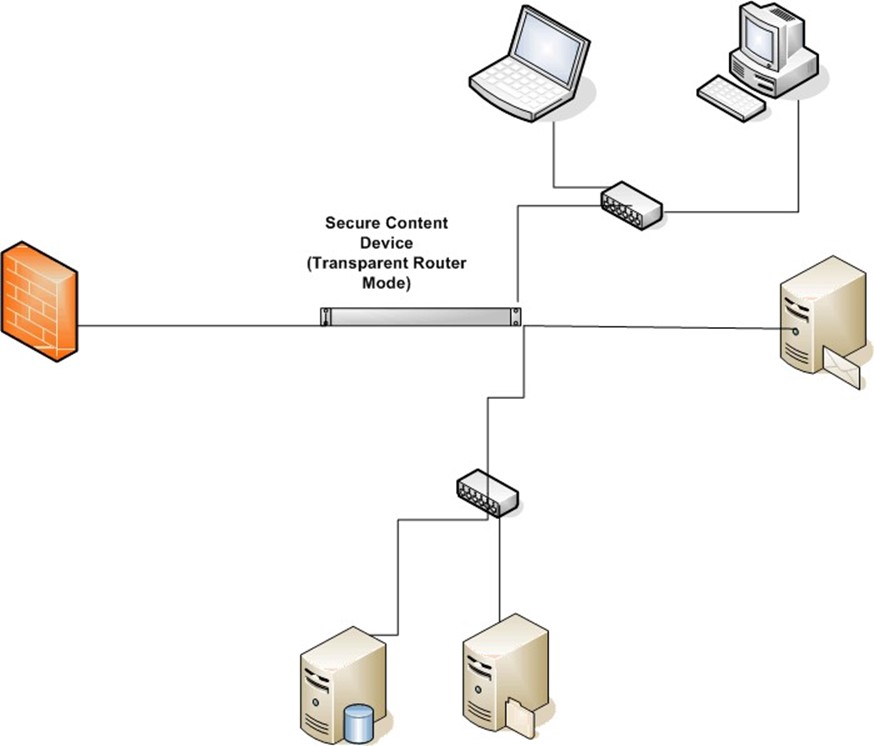

Transparent router mode is recommended for networks that use firewall rules to control traffic. In explicit proxy mode, packets are redirected to the secure content device, making the client's IP address unavailable to the firewall rules engine (see Figure 3.2).

Figure 3.2: In transparent router mode, the secure content device functions as both a content filter and a router.

Transparent Bridge Mode

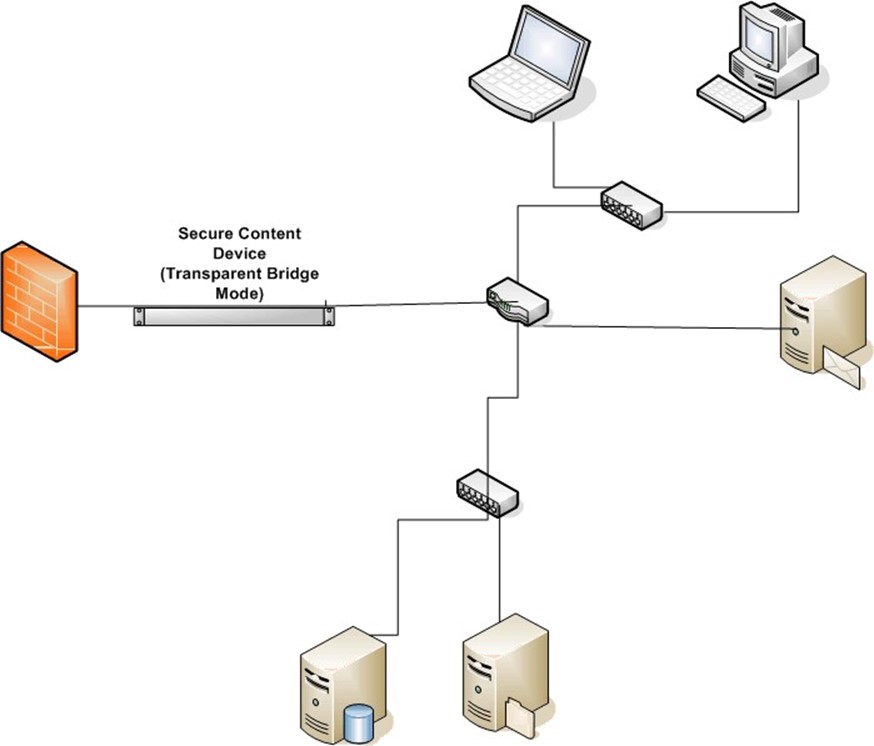

Transparent bridge mode is similar to transparent router mode, but simpler. As Figure 3.3 shows, the secure content device does not route traffic; it simply passes traffic between two network segments. No clients, firewalls, or routers must be reconfigured. In transparent bridge mode, the secure content appliance should be placed between the firewall and the router.

Figure 3.3: In transparent bridge mode, the device joins two segments of a network and passes traffic between the two without routing.

Secure content appliances should be placed inside a well-configured firewall. Administrators should also determine which mode the appliance will use. Transparent bridge mode is the easiest to configure; however, all traffic will pass through and be filtered. In high-traffic networks, you may find better performance by configuring the appliance in explicit proxy mode and sending only relevant traffic (for example, HTTP, FTP, SMTP and POP3). Of course, if the routing features of the appliance are used, the appliance should be placed at the junction of two or more network segments.
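The trade-offs among the three modes can be summarized as a small lookup table. The sketch below only restates what the text says about each mode; the dictionary layout and key names are assumptions chosen for illustration.

```python
# What must be reconfigured, and what each mode does, as described in the text.
MODES = {
    "explicit proxy": {
        "reconfigure": {"devices that use the appliance"},
        "routes": False,
        "filters_all_traffic": False,   # only traffic sent to it explicitly
    },
    "transparent router": {
        "reconfigure": {"firewall", "routers"},
        "routes": True,
        "filters_all_traffic": True,
    },
    "transparent bridge": {
        "reconfigure": set(),           # nothing else changes
        "routes": False,
        "filters_all_traffic": True,
    },
}

# The mode with the least reconfiguration work.
easiest = min(MODES, key=lambda mode: len(MODES[mode]["reconfigure"]))
# Modes that let administrators target a subset of traffic.
selective = [m for m, props in MODES.items() if not props["filters_all_traffic"]]

print(easiest)    # transparent bridge
print(selective)  # ['explicit proxy']
```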

Why are desktop antivirus software and personal firewalls still needed?

There are two primary reasons for deploying desktop antivirus software and personal firewalls on networks that contain secure content devices:

- No security device can address all potential security threats; multiple layers of security are required to maintain network and system integrity

- Mobile devices are not protected by a secure content device when they are not connected to the network

All organizations are subject to the limits of any single security technique or tool and a growing number are faced with the challenge of managing and securing mobile devices.

Layered Security

The principle of layered security dictates that multiple forms of countermeasures are required to ensure the integrity of a network and related infrastructure. This approach is also known as defense-in-depth: security measures are placed throughout the network on the perimeter, servers, workstations, and mobile devices. Defenses also operate at different levels of the network; for example, network firewalls can filter based on protocols and ports, and application firewalls can examine XML message content to identify invalid or unauthorized messages.

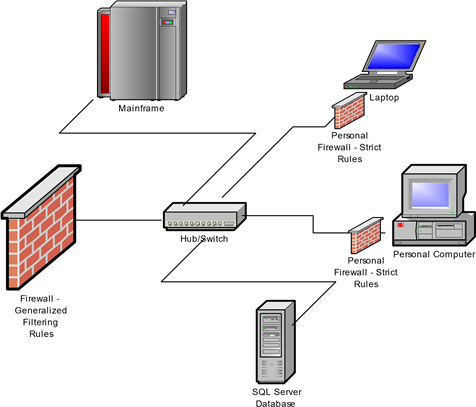

Example: Firewall Rules

Take a simple example. A company's network includes an IBM mainframe, a Microsoft SQL Server system, and a number of desktop and laptop Microsoft Windows clients. Let's assume the mainframe and SQL Server support business partners in a supply chain by providing access to parts and inventory information. Many users within the organization do not need access to those applications but their desktops and laptops are connected to the same network so they are exposed to the same traffic. By installing personal firewalls on those devices, the network administrators can block traffic on ports that must be open on the network but are not used locally. This action provides an extra layer of defense against threats that are propagated on those protocols or ports (see Figure 3.4).

Similarly, if a device were to become infected with a blended threat that included a keylogging program, a personal firewall could prevent the transmission of information over, for example, an Internet Relay Chat (IRC) protocol. There may be legitimate reasons for others to use that protocol, so the network firewalls would allow the traffic through. Using a personal firewall, one can configure finer-grained rules and better protect information flow.

Figure 3.4: Defense-in-depth allows for both coarse-grained security for the overall network and fine-grained filtering localized to systems that require it.
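The two layers in this example can be sketched as two port sets, one for the network firewall and one for a personal firewall on a single desktop. The port choices are illustrative assumptions: 1433 is the default Microsoft SQL Server port and 6667 is a commonly used IRC port, but the scenario in the text does not name specific ports.

```python
# Ports the network firewall leaves open for the organization as a whole.
NETWORK_OPEN_PORTS = {80, 1433, 6667}

# Ports this particular desktop actually needs (assumed for the example).
DESKTOP_NEEDED_PORTS = {80}

def reaches_desktop(port: int) -> bool:
    """Traffic must pass the network firewall AND the personal firewall."""
    passes_network = port in NETWORK_OPEN_PORTS
    passes_personal = port in DESKTOP_NEEDED_PORTS
    return passes_network and passes_personal

print(reaches_desktop(80))    # True
print(reaches_desktop(6667))  # False: open on the network, blocked locally
```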

Example: Antivirus Protection

Another example of the benefits of layered security involves the use of encryption. To ensure the privacy and integrity of messages, email users can encrypt a message and apply a digital signature to it. Encryption scrambles the message so that it cannot be read in transit to its destination. A digital signature is a string of characters appended to a message that is generated using a hash algorithm and a code known as the sender's private key. Upon receiving the message, the receiver decrypts it and then uses another code, called the sender's public key, to check the digital signature against a freshly calculated hash of the message. If the two match, the recipient knows the message is authentic and has not been tampered with.
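The hash, sign, and verify cycle can be sketched with textbook RSA and the standard library. This is a toy under loud assumptions: the key is built from two small Mersenne primes, there is no padding, and nothing here is secure or representative of how a mail client implements signatures. It only shows why any change to a signed message makes verification fail.

```python
import hashlib

# Toy RSA key built from two Mersenne primes. Far too small for real use.
P, Q = 2**61 - 1, 2**31 - 1
N = P * Q
E = 65537                            # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (needs Python 3.8+)

def digest(message: bytes) -> int:
    """Hash the message and reduce it into the range of the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def sign(message: bytes) -> int:
    """Sender: transform the hash with the private key."""
    return pow(digest(message), D, N)

def verify(message: bytes, signature: int) -> bool:
    """Receiver: recover the hash with the public key and compare."""
    return pow(signature, E, N) == digest(message)

original = b"Quarterly report with attachment."
signature = sign(original)
unchanged_ok = verify(original, signature)
altered_ok = verify(b"Quarterly report.", signature)
print(unchanged_ok, altered_ok)
```

The unchanged message verifies, while the altered one does not, because its recalculated hash no longer matches the value recovered from the signature.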

Digital signatures and encryption are well-designed to meet the needs of privacy and integrity, but what happens when a virus is attached to a protected message? Secure content managers have a few options:

- The secure content appliance can reject the email and not deliver it to the recipient.

- The secure content device can remove the virus and send the rest of the message to the recipient. In that case, the content of the message has changed, so the recipient will not calculate the same digital signature; he or she will not know if any change other than removing the virus has occurred.

- The administrator can leave the virus embedded in the message and have a desktop antivirus program detect and remove the malware.

None of these options is ideal. Administrators must choose between denying a service to a user (either reliable delivery of email or message integrity checks) and allowing a known piece of malware into the network (see Figure 3.5).

Figure 3.5: Encrypted, digitally-signed emails are sometimes better handled by desktop antivirus than by network-level scans.

By combining countermeasures at different points in the network and using multiple types of security tools, network and security administrators have more options for configuring security mechanisms that allow them to accommodate the needs of users and applications within the network. This type of layered defense also provides multiple points of protection should a single point become compromised. However, even within a well-secured network, mobile devices present security challenges.

Securing Mobile Devices

One of the advantages of using a network appliance for securing content is that any content entering or leaving the network is protected. Unfortunately, network devices do not always stay put. Laptops and PDAs with network access pose a particular threat to network security because they are allowed to physically disconnect and reconnect to the network, often at will.

Consider how easy it is to circumvent perimeter defenses with mobile devices. Imagine an employee who is blocked from browsing his favorite music sharing site during his lunch hour, so he decides to disconnect his laptop from the internal network, walk across the street to the local coffee shop with a WiFi hotspot, download music files (and unknowingly, some spyware), then return to the office to reconnect to the network. Spyware that would have been blocked by the antispyware mechanism in the secure content appliance, had the appliance not already blocked the URL (another example of a layered defense), is now within the organization's network.

A single point of detection and prevention is not sufficient with mobile devices. As mobile devices will not always have the security services of the network available to them, these devices must have local versions of antivirus, anti-spyware, and personal firewalls.
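A check for the three local tools named above might look like the following. This is a minimal sketch, not the behavior of any particular management product; the tool labels and function names are hypothetical.

```python
# The local protections the text says every mobile device must carry.
REQUIRED_TOOLS = {"antivirus", "anti-spyware", "personal firewall"}

def missing_tools(installed) -> set:
    """Return the required tools that are absent from the device."""
    return REQUIRED_TOOLS - set(installed)

def may_reconnect(installed) -> bool:
    """Allow the device back on the network only if nothing is missing."""
    return not missing_tools(installed)

laptop = ["antivirus", "personal firewall"]
print(sorted(missing_tools(laptop)))  # ['anti-spyware']
print(may_reconnect(laptop))          # False
```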

For additional protection of mobile devices, consider a third-party tool such as McAfee ePolicy Orchestrator, which can ensure devices remain in compliance with security policies. If a device is changed and no longer in compliance, a third-party tool can enforce compliance and updates as well as notify administrators when threats or rogue systems attach to the network.

Layered security is considered a best practice among security professionals and should be practiced to levels appropriate to an organization's needs and capabilities. A secure content appliance adds a layer of protection and in fact uses multiple layers within itself (for example, malicious content missed by URL blocking can be caught by antivirus software). Mobile devices by their nature circumvent perimeter defenses such as firewalls and secure content devices. They must have their own localized security tools in addition to those available on the network.

How does a secure content appliance work with Web servers, caching servers, and application servers?

There are many secure content appliances that provide content filtering services. Like other servers in a distributed networking environment, the solutions use common protocols to communicate with other servers. Key topics to consider when introducing a secure content appliance are:

- What protocols are used on the network to be protected?

- Where should the appliance be positioned for maximum protection?

- How will the secure content appliance affect overall system performance and functionality?

Network Protocols

The Internet uses several protocols, or standards for communication, but not all of them are relevant to securing content. For example, the low-level Open Shortest Path First (OSPF) protocol used by routers is not subject to content filtering. The most important protocols from a content filtering perspective are:

- Hyper Text Transfer Protocol (HTTP) used by the Web

- File Transfer Protocol (FTP)

- Simple Mail Transfer Protocol (SMTP) used to send email messages between servers

- Post Office Protocol 3 (POP3) used to retrieve email from servers

Firewalls generally allow traffic using these protocols to pass in and out of a protected network. Therefore, a secure content appliance would have to be configured with policies defined for each protocol to ensure maximum protection. In addition to defining policies, the level of protection is also dependent on how the secure content appliance is positioned in the network and what traffic is analyzed.

In some cases, a systems administrator may want to scan all HTTP, FTP, SMTP, and POP3 traffic as soon as it passes through the firewall. In other cases, there may be high volumes of traffic to a program running on an application server that need not be analyzed. For example, the traffic may be an XML data exchange between two managed servers, so the content is well understood and filtering it would just put an additional, unnecessary load on the appliance.
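Per-protocol policies with an exemption for well-understood server-to-server traffic can be sketched as two lookups. The scan names, host names, and the `EXEMPT_FLOWS` set are assumptions for illustration and do not reflect any appliance's actual policy syntax.

```python
# Hypothetical scans applied to each filtered protocol.
POLICIES = {
    "HTTP": ["antivirus", "url-filter"],
    "FTP": ["antivirus"],
    "SMTP": ["antivirus", "anti-spam"],
    "POP3": ["antivirus", "anti-spam"],
}

# Managed server pairs whose traffic is well understood and need not be scanned.
EXEMPT_FLOWS = {("partner-gateway", "app-server")}

def scans_for(protocol: str, source: str, destination: str) -> list:
    """Return the scans to run on a flow; an empty list means pass unscanned."""
    if (source, destination) in EXEMPT_FLOWS:
        return []
    return POLICIES.get(protocol, [])

print(scans_for("SMTP", "internet", "mail-server"))
print(scans_for("HTTP", "partner-gateway", "app-server"))
```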

Positioning the Secure Content Appliance

The position of the secure content appliance will depend, in part, on which operational mode is used. There are three operational modes:

- Explicit proxy mode

- Transparent router mode

- Transparent bridge mode

Explicit Proxy Mode

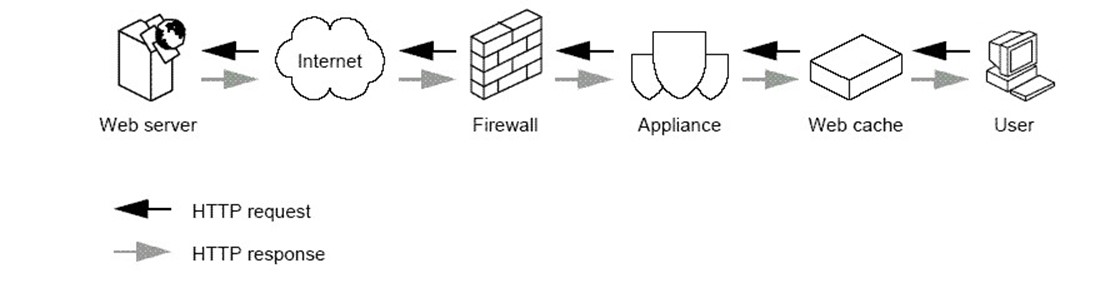

In explicit proxy mode, network devices are configured to send traffic directly to the secure content appliance. The appliance in this mode acts as a proxy processing traffic for other network devices.

This mode is used, for example, if only SMTP traffic is filtered. In that case, external mail servers would be configured to send mail messages to the appliance, which would filter the traffic and then forward it on to the internal mail server. Similarly, explicit proxy mode could be used to scan HTTP traffic by using the secure content appliance as a proxy for Web servers.

The advantage of this mode is that systems administrators can target a subset of all network traffic for filtering and avoid unnecessary processing by the appliance. One relative disadvantage of this approach is that it requires additional work on the part of the systems administrators to configure servers to explicitly send traffic to the secure content appliance.

As Figure 3.6 shows, in explicit proxy mode, the position of the appliance is determined more by traffic patterns across network segments than the need to have the appliance in a particular position. As devices are configured to send traffic to the appliance, it can be positioned virtually anywhere on the network. Of course, the secure content appliance should still be positioned behind a firewall.

Figure 3.6: In explicit proxy mode, the appliance can be placed anywhere in the network because traffic is routed as needed to the appliance.

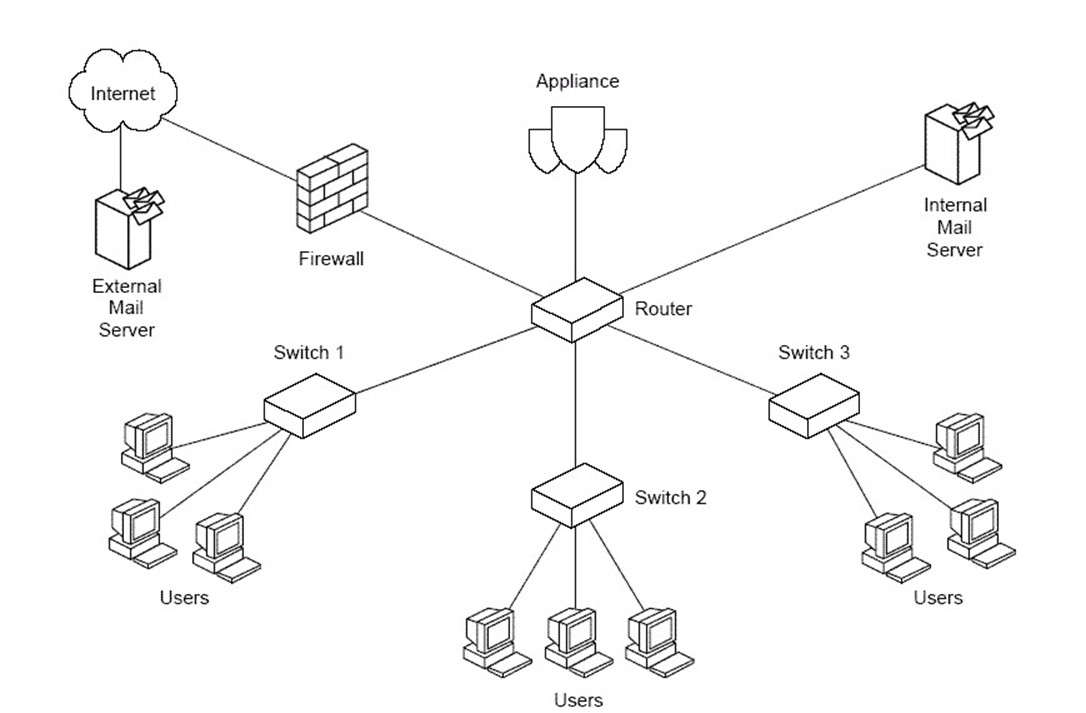

Transparent Router Mode

In transparent router mode, the appliance acts as a router as well as a content filter. Other network devices do not need to be configured to explicitly send traffic to the device unless it is also acting as your default gateway. The appliance should be placed just inside the firewall so that all traffic entering or leaving the network is scanned. The content filtering is done transparently to the devices generating traffic. When in transparent router mode, the appliance routes traffic between two networks.

Transparent Bridge Mode

In transparent bridge mode, the secure content appliance joins two physical networks, allowing them to be treated as a single network. No routing is performed. This setup is simpler than transparent router mode and requires less configuration. As in transparent router mode, the appliance in transparent bridge mode should be positioned between the firewall and other network devices (see Figure 3.7).

Figure 3.7: In both transparent bridge mode and transparent router mode, the secure content appliance should be placed just inside the firewall.

Configuring for Performance and Functionality

Maintaining acceptable performance levels is a major concern in many network environments. Tools such as caches and load-balancing hardware are often introduced to compensate for increasing demands on network devices. Caches improve performance by locally storing frequently used content so that the same content is not constantly retrieved from its source Web site or database. When processing loads on application servers increase, load-balancing hardware is sometimes used to divide the workload between multiple application servers. In both of these cases, a secure content appliance can still easily fit into the network to provide the content scanning functionality needed. As Figure 3.8 shows, the secure content appliance can be positioned between the firewall and Web cache so that any content stored in the cache has been filtered.

Figure 3.8: The secure content appliance is placed between the intranet firewall and the Web cache to ensure content that reaches the cache has been appropriately filtered.
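The effect of placing the filter ahead of the cache can be sketched in a few lines: content is checked once on its way in, and only clean content is ever stored. The class, the `fake_fetch` function, and the string-matching "filter" are stand-ins invented for this example.

```python
class FilteredCache:
    """Cache that only ever stores content that has passed the filter."""

    def __init__(self, fetch, is_clean):
        self._fetch = fetch          # callable: url -> content (hypothetical)
        self._is_clean = is_clean    # callable standing in for the appliance
        self._store = {}

    def get(self, url):
        if url in self._store:
            return self._store[url]  # already filtered on the first request
        content = self._fetch(url)
        if not self._is_clean(content):
            return None              # blocked content never reaches the cache
        self._store[url] = content
        return content

calls = []
def fake_fetch(url):
    calls.append(url)
    return "malware" if "bad" in url else "page body"

cache = FilteredCache(fake_fetch, lambda content: content != "malware")
first = cache.get("http://example.com/")
second = cache.get("http://example.com/")      # served from the cache
blocked = cache.get("http://example.com/bad")
print(first, second, blocked, len(calls))
```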

In situations in which load-balancing hardware is used with application servers, the secure content appliance is placed prior to the load-balancing device in the traffic flow.

The secure content device is designed for load sharing. In cases in which traffic and performance demands are so high that a single appliance cannot meet performance needs, multiple appliances can be configured in a load-sharing arrangement. In this configuration, a master appliance receives all traffic and then passes it to load-sharing devices, which perform single functions, such as virus and spam scanning. This division of labor allows for a more simplified configuration than had traditional load-balancing techniques been used with multiple appliances.
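The master and single-function arrangement can be pictured as a dispatcher that hands each message to one specialized scanner after another. This is only a sketch of the idea: the scanner functions use placeholder string checks, and the names are hypothetical rather than taken from any appliance.

```python
def contains_virus(message: str) -> bool:
    return "EICAR" in message            # placeholder check, not a real scanner

def looks_like_spam(message: str) -> bool:
    return "buy now" in message.lower()  # placeholder check

# Each load-sharing device performs a single function.
SCANNERS = [("virus scanner", contains_virus), ("spam scanner", looks_like_spam)]

def master_dispatch(message: str) -> str:
    """Master appliance: pass the message to each single-function device."""
    for name, flags_problem in SCANNERS:
        if flags_problem(message):
            return f"blocked by {name}"
    return "delivered"

print(master_dispatch("Meeting moved to 3pm"))
print(master_dispatch("BUY NOW and save"))
```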

There are multiple ways to configure the secure content appliance to work with other network devices. By considering the protocols to filter, the devices which require filtered traffic, the routing services required, and the performance demands on the network, systems administrators can find an optimal configuration of the secure content appliance with other network devices.

Can a secure content appliance be attacked?

A widely held belief in the security community is that any device can be compromised if a group of skilled perpetrators has the time, resources, and desire to break in. However, some security countermeasures, such as encryption with very long keys, can take years and massive computing resources to break. As a result, most security measures are designed to keep attackers and others at bay for a long enough time for the attempts to be discovered or to raise the cost of breaking in to a level so high that the value of the information stolen is no longer worth the cost of retrieving it.

There are certainly incentives to attacking a secure content appliance. For example, if an attacker were able to compromise the secure content appliance and change the virus scanning policy for the Hyper Text Transfer Protocol (HTTP), the attacker could deliver to a device spyware that includes a keylogger. If the antivirus scanning level of a global policy could be reduced, a blended threat could be transmitted, which could exploit vulnerabilities in database applications and steal private or company confidential information. Like firewalls, a secure content appliance is a front-line safeguard; unlike firewalls, though, secure content appliances perform complex analysis well beyond the abilities of a firewall. Breaking through a secure content device can be much more advantageous to an attacker than compromising a firewall. Clearly, there is no shortage of motive to attack a secure content device.

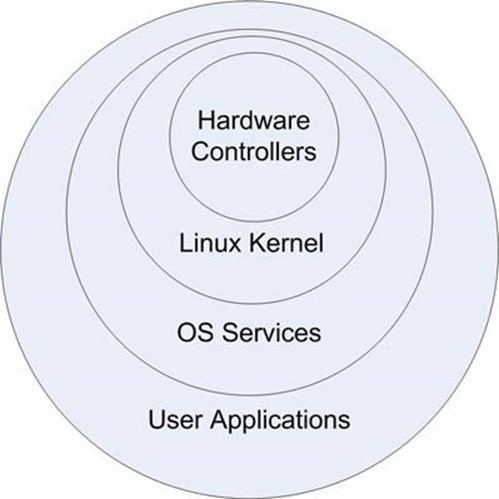

Security and Operating System Architecture

Sound security begins with sound design. The Linux operating system (OS) used in some third-party secure content appliances is based on a ringed architecture, as Figure 3.9 shows.

Figure 3.9: The Linux OS uses four major subsystems that provide a ringed architecture.

The purpose of this type of architecture is to isolate critical functions, such as process scheduling and memory management, from user programs that may contain errors or malicious code. Each subsystem is designed to perform specific tasks. The Linux kernel manages five main tasks:

- Process scheduling

- Memory management

- Virtual file system

- Network interface

- Interprocess communications

The kernel depends upon hardware controllers to provide some services and in turn provides services to the next layer, OS services. Only the kernel has access to hardware features related to memory and processing. Users may not change code in the kernel. OS services provide file system and window management services, which are used by user applications, such as databases, Web servers, and other applications.

By separating duties between levels, the system is protected from malicious code while still allowing programmers to invoke OS services as needed. For example, an application can make a request to write a block of data to a file system and may even specify exactly where in a file the data block is to be written, but the application may not specify a location using disk geometry (such as the track, cylinder, and sector of a disk). The kernel hides those details behind the virtual file system that is further abstracted by the file system in the OS services layer. The benefits of this type of protection become clear when you consider the potential impact if it were missing.
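The file-write example above can be tried directly. In the sketch below, the application chooses a byte offset within a file, and nothing in the interface lets it name a track, cylinder, or sector. The file name and contents are arbitrary choices for the demonstration.

```python
import os
import tempfile

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "records.dat")

    with open(path, "wb") as f:
        f.write(b"\x00" * 16)        # create a 16-byte file of zeros

    with open(path, "r+b") as f:
        f.seek(8)                    # position by byte offset within the file
        f.write(b"DATA")             # the OS decides where this lands on disk

    with open(path, "rb") as f:
        contents = f.read()

print(contents[8:12], len(contents))
```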

An early form of computer virus was the boot sector virus. These viruses wrote to specific areas of disks, known as Master Boot Records (MBRs), which contain the code used to start the OS. By changing critical code used to manage the disk, a virus writer could control the behavior of the disk.

A ring architecture, such as that used in Linux, provides a well-established and effective mechanism for preventing most malware from disrupting critical OS functions. Although users and attackers can add programs, even ones with malicious code, isolating these programs minimizes the risk that malicious code can disrupt core services.

Hardening an OS

Hardening an OS consists of several steps:

- Shutting down unnecessary services

- Patching the OS and services

- Configuring services to reduce vulnerabilities

Like other areas of security, no one of these steps is enough to protect a server, but in concert these steps can significantly reduce the risk of exposure to a security breach.

Shutting Down Unnecessary Services and Removing Unneeded Programs

Linux distributions provide a dizzying array of applications and utilities including compilers, graphical interfaces, databases, Web servers, communications programs, multimedia systems, personal productivity packages, file transfer programs, windows managers, and more. Very few of these are necessary to perform content filtering. In the best case scenario, installing these services simply consumes disk storage; in the worst case scenario, they introduce vulnerabilities.

Take a compiler for example. The source code for Linux and other open source systems is readily available on the Internet. If an attacker could introduce a piece of code onto the server and then recompile the program, the attacker could compromise a server regardless of the hardware platform. Simply removing the compiler in this case would ensure the server could not be compromised in this way.

In other cases, an attacker does not even have to introduce a vulnerability because it exists already. For example, programs that do not perform range checking are subject to buffer overflow attacks. During these attacks, the overflow either disrupts the functions of a program or facilitates the introduction of new code during program execution. The new code changes the behavior of the program to perform some malicious action, such as acquiring root access and copying the password file to an FTP site controlled by the attacker. Needless to say, if the program with the vulnerability is not running, the attacker cannot exploit it.
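The range check that vulnerable programs omit amounts to one comparison before the copy. Python is memory-safe, so the sketch below cannot actually overflow; it only illustrates the length test that a program in a language such as C must perform itself. The function name and buffer size are assumptions.

```python
BUFFER_SIZE = 16

def copy_into_buffer(buffer: bytearray, data: bytes) -> None:
    """Copy data into a fixed-size buffer, refusing input that does not fit."""
    if len(data) > len(buffer):
        # Without this check, a C-style copy would write past the buffer's end.
        raise ValueError(f"input of {len(data)} bytes exceeds {len(buffer)}-byte buffer")
    buffer[:len(data)] = data

buf = bytearray(BUFFER_SIZE)
copy_into_buffer(buf, b"hello")

try:
    copy_into_buffer(buf, b"A" * 64)   # oversized input is rejected
    rejected = False
except ValueError:
    rejected = True

print(bytes(buf[:5]), rejected)
```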

Patching the OS and Services

Another method of protecting a secure content device is to apply patches to services and OSs. Many seasoned IT administrators have mixed feelings about patching. On the one hand, it is comforting to know that developers are continually correcting vulnerabilities, improving performance, and making other enhancements to their systems. On the other hand, many systems administrators have learned the hard way about dependencies between components. A critical business application may break after a service pack is applied because, in addition to patching a known vulnerability, the service pack might include dozens of other changes to code. Such is not the case with network appliances.

One of the benefits of network appliances is that they are strictly controlled by vendors. Every piece of software, every service that runs, and every dependency between modules is known and tested by the vendor before the appliance ships. The reduced flexibility to systems administrators is actually a benefit: the vendor only needs to support a small number of possible configurations.

Configuring Services to Reduce Vulnerabilities

The Bastille Hardening program has been used in secure content appliances, such as those provided by McAfee. This program analyzes a configuration and guides administrators through the hardening process. Bastille is a well-known and widely used hardening program for Linux and HP-UX and is recommended by the Center for Internet Security. Following Bastille recommendations can help reduce exposure to vulnerabilities in a number of areas including:

- Patches

- File permissions

- Account security

- Miscellaneous daemons

- Sendmail

- DNS

- Printing
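To make one of these areas concrete, the sketch below tests a file for the world-writable permission bit, a common file-permission finding. It is not how Bastille itself works; it is a standalone illustration that assumes POSIX permission semantics, and the function name is hypothetical.

```python
import os
import stat
import tempfile

def is_world_writable(path: str) -> bool:
    """Return True if any user on the system may write to the file."""
    return bool(os.stat(path).st_mode & stat.S_IWOTH)

# Demonstration on a throwaway file.
with tempfile.NamedTemporaryFile() as tmp:
    os.chmod(tmp.name, 0o666)          # readable and writable by everyone
    loose = is_world_writable(tmp.name)
    os.chmod(tmp.name, 0o644)          # writable by the owner only
    tight = is_world_writable(tmp.name)

print(loose, tight)
```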

How do appliances stay up to date on the latest threats?

Vendors typically provide frequent updates for secure content appliances. Virus developers and spammers change their techniques and content to avoid detection, but vendors keep abreast of these changes and update both signature files (virus definition and spam definition files) and the scanning engines that use those signature files. Fortunately, keeping the appliance up to date on the latest threats is a relatively simple matter because the appliance automates virtually all of the work.

Tracking Updates

The Monitor | Updates option allows administrators to set the schedule for updating both signature files and scanning engines.

Figure 3.10: The automatic update facility allows for separate scheduling of signature file (rules) and scanner (engine) updates.

The Monitor | Updates option also displays information about the status of automatically scheduled antivirus and anti-spam updates. The display includes:

- Names of scheduled updates

- Current status of each scheduled update

- Date and time of last update

Antivirus and anti-spam updates are configured separately.

Updating Antivirus Applications

Antivirus applications should be updated at least once per week, but more frequent updates are preferable. Most antivirus designers and developers regularly update virus definition files to counter emerging threats. Although the application uses heuristic, or "rule of thumb," filters to catch a class of viruses as well as virus-specific rules to detect specific viruses, it is best to keep the virus definition files up to date to ensure new threats are consistently detected. When a virus spreads suddenly or can inflict significant damage, antivirus vendors will create extra definition files and release them as soon as possible to combat the spread of the virus.

In addition to keeping the virus definition file up to date, the appliance keeps the antivirus engine up to date. The engine is the program that reads the virus definition file and uses those definitions to identify viruses and clean infected files. The engine is updated to detect new types of viruses that do not have the same characteristics as older viruses. In general, the engine is updated every few months.
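The two update cadences can be expressed as a simple staleness check. The seven-day limit follows the weekly guidance above; the 120-day limit for the engine is an assumed reading of "every few months", and the function and dictionary names are hypothetical.

```python
from datetime import datetime, timedelta

# Maximum acceptable age for each component (engine value is an assumption).
MAX_AGE = {
    "definitions": timedelta(days=7),
    "engine": timedelta(days=120),
}

def is_stale(component: str, last_update: datetime, now: datetime) -> bool:
    """Return True if the component has gone longer than allowed without an update."""
    return now - last_update > MAX_AGE[component]

now = datetime(2024, 1, 31)
definitions_stale = is_stale("definitions", datetime(2024, 1, 20), now)  # 11 days old
engine_stale = is_stale("engine", datetime(2024, 1, 1), now)             # 30 days old
print(definitions_stale, engine_stale)  # True False
```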

Update files can be downloaded to the appliance from three sources:

- An authorized vendor FTP site

- A proxy on the local area network (LAN), if the appliance is not configured to access external FTP sites directly

- A server within the network that has already downloaded the files

To keep up to date, use the Monitor | Status | General Information or the Monitor | Updates option to determine the current revision level of the antivirus engine and virus definition file.

Updating the Anti-Spam Application

Like the antivirus application, the anti-spam components of a secure content appliance are updated frequently by vendors. Both spam definition files and the anti-spam engine are kept up to date to counter changes in spamming practices.

New anti-spam definition files will contain rules to identify spam that may have slipped past earlier filters. In addition, extra rules are created for sudden widespread instances of spam that are otherwise not caught by existing filters. By keeping up to date on the latest virus and spam threats, secure content appliances are able to deploy appropriate countermeasures as new threats emerge.